What Is an Incident Management System?

An incident management system (IMS) is a structured framework combining processes, roles, and tools that allows organizations to detect, log, triage, resolve, and review unplanned disruptions to services, systems, or operations. When something breaks, an IMS ensures you respond with speed and coordination rather than chaos.

Whether you are a DevOps team scrambling to restore an API, a cybersecurity team containing a breach, or an operations manager dealing with a workplace injury report, the underlying need is the same: a repeatable, documented system that gets the right people moving in the right direction fast.

A well-implemented incident management system goes well beyond putting out fires. It shortens the time between detection and resolution, creates an audit trail for compliance, and generates the data you need to stop the same fire from starting again.

Incident vs. Problem vs. Change: What’s the Difference?

These three terms are often used interchangeably, but they represent distinct concepts in ITIL and broader service management. Conflating them leads to misrouted tickets, unclear ownership, and repeated disruptions.

| Term | What It Means | Example |
| --- | --- | --- |
| Incident | An unplanned interruption or degradation in the quality of an IT service or operational process that requires immediate attention. | The payment gateway goes down at 11 PM on a Friday. |
| Problem | The underlying root cause of one or more incidents. Problems are investigated over time on a separate timeline from the urgency of an active outage. | Repeated payment gateway outages traced back to a memory leak in a third-party library. |
| Change | A planned addition, modification, or removal of anything that could affect IT services. Changes follow an approval and scheduling process. | Deploying a patch to fix the memory leak causing payment gateway outages. |

Real-World Example: What a Good (and Bad) Incident Response Looks Like

The difference between a one-hour outage and a six-hour outage often comes down to process, not capability. Here is what that gap looks like in practice.

| Bad Incident Response | Good Incident Response |
| --- | --- |
| Nobody knows who owns the incident. Three engineers are all investigating independently, duplicating work. | An Incident Commander is assigned within five minutes. Roles are clear before the first Slack message is sent. |
| Updates go silent. Customers are tweeting about the outage before the team even has a status page post. | A Communication Lead posts a holding message to the status page within ten minutes of detection, then updates every 30 minutes. |
| The fix is deployed without testing in a parallel environment. It breaks something else. | The Resolver validates the fix in staging, then deploys with a rollback plan ready. |
| No postmortem is run. The same issue recurs six weeks later. | A blameless postmortem is completed within 48 hours. The root cause is documented and a permanent fix is scheduled as a change request. |

Why Every Team Needs an Incident Management System (Yes, Even Yours)

Many teams believe incident management is a concern only for large enterprises or organizations running critical infrastructure. That assumption is expensive. The cost of an unmanaged incident compounds faster than most leaders realize, and shows up in ways that go well beyond a single outage.

The Hidden Cost of Unmanaged Incidents

Downtime is the number you see. The numbers you don’t see are often larger.

Customer trust erodes quietly. A user who experiences an unacknowledged outage doesn’t send a complaint; they switch to a competitor. According to industry research, organizations that fail to communicate proactively during incidents see significantly higher churn in the 30 days following a disruption than those that maintain transparent status updates.

Regulatory exposure grows with every undocumented incident. In markets like India, the UAE, and Saudi Arabia, regulators increasingly require evidence of incident detection, response timelines, and post-incident reporting. A company without an IMS is a company without an audit trail, and that gap is costly when a regulator comes knocking.

Engineering velocity suffers. When incidents are not properly reviewed, the same root causes resurface. Teams spend their capacity firefighting rather than building. Studies consistently show that high-performing engineering teams spend significantly less time on unplanned work, and that gap is almost entirely explained by mature incident management practices.

Leadership visibility disappears. Without structured data on mean time to detect (MTTD), mean time to resolve (MTTR), and incident volume by category, it is impossible to make informed decisions about where to invest in reliability, staffing, or tooling.

5 Key Benefits of a Structured Incident Management System

Organizations that move from ad-hoc firefighting to structured incident management consistently report:

1. Faster resolution: Clear ownership means no one waits for someone else to act

2. Clear accountability: Every incident has a named owner and an audit trail

3. Compliance-ready documentation: Meet CERT-In, NESA, NCA, and ISO 27001 requirements

4. Fewer repeat incidents: Post-incident reviews unearth root causes and prevent recurrence

5. Stakeholder trust: Proactive communication keeps customers and leadership informed

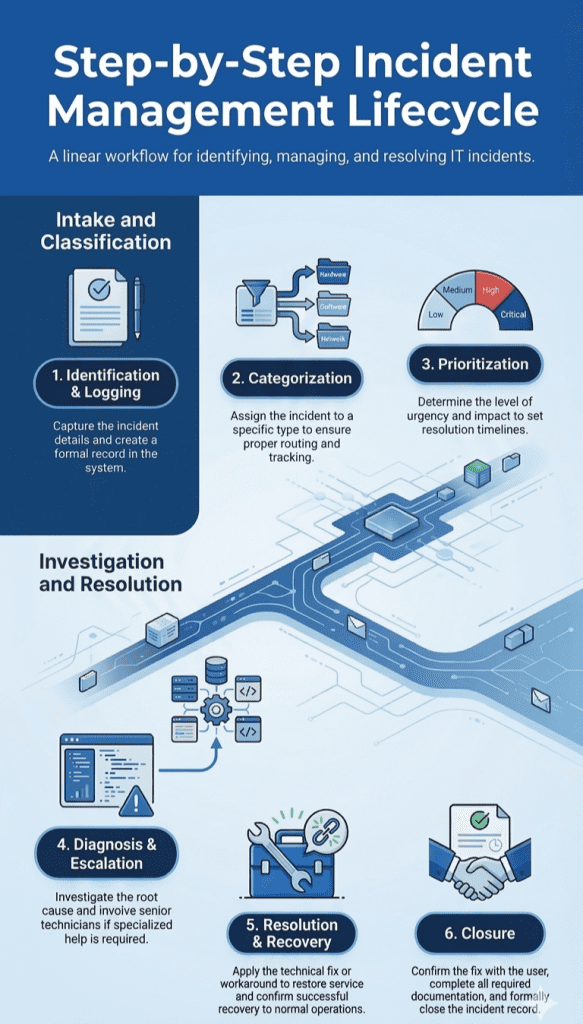

The Incident Management Process: 6 Steps That Actually Work

Incident management is a sequence of coordinated steps, each with defined inputs, outputs, and owners. The following six-step process is adapted from ITIL best practices and refined for modern teams running hybrid or cloud-native environments.

Step 1: Detection and Identification

An incident cannot be managed until it is known. Detection sources fall into three categories: automated monitoring alerts, internal team reports, and external customer reports. Best-in-class teams weight these in that order, since automated detection should catch the majority of incidents before a user ever experiences them.

Modern monitoring tools, whether infrastructure-level (CPU, memory, disk), application performance monitoring (APM), or synthetic monitoring, can trigger alerts within seconds of an anomaly. Teams should define alert thresholds that balance sensitivity (catching real problems early) with specificity (avoiding alert fatigue).
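One common way to trade sensitivity against specificity is to require several consecutive breaches before an alert fires. The sketch below is a minimal illustration of that idea, not a description of any particular monitoring product. The `ThresholdAlert` class, the 90% CPU threshold, and the three-sample rule are all assumptions chosen for the example.

```python
from collections import deque


class ThresholdAlert:
    """Fire only after `required_breaches` consecutive samples exceed `threshold`."""

    def __init__(self, threshold: float, required_breaches: int = 3) -> None:
        if required_breaches < 1:
            raise ValueError("required_breaches must be at least 1")
        self.threshold = threshold
        self.required_breaches = required_breaches
        # Keep only the most recent samples needed for the decision.
        self._recent = deque(maxlen=required_breaches)

    def observe(self, value: float) -> bool:
        """Record one sample and return True if the alert should fire."""
        self._recent.append(value > self.threshold)
        return len(self._recent) == self.required_breaches and all(self._recent)


# Hypothetical CPU utilisation samples, one per minute.
cpu_alert = ThresholdAlert(threshold=90.0, required_breaches=3)
samples = [95.0, 40.0, 92.0, 93.0, 97.0]
fired = [cpu_alert.observe(sample) for sample in samples]
print(fired)
```

A single spike (the first sample) does not page anyone, while a sustained breach does. Lowering `required_breaches` raises sensitivity at the cost of more noise.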

Internal detection relies on staff knowing where to report issues and how quickly. This requires a clear incident intake channel, typically a dedicated Slack channel, email alias, or ticketing form that anyone in the organization can use.

| Pro Tip: Don’t wait for a customer to tell you your service is down. By the time a user submits a support ticket, your MTTR clock has been running for minutes or even hours. Automated detection is a production-critical requirement for any team running live systems. |

Step 2: Logging and Ticket Creation

Every incident must have a written record. No exceptions. A verbal incident, even one resolved in ten minutes, that goes undocumented is a missed learning opportunity, a compliance gap, and a liability if the issue recurs or escalates.

The incident log or ticket should capture, at minimum: the date and time of detection, the reporter’s name, the affected system or service, the initial severity classification, and a plain-language description of the impact. This becomes the single source of truth for all subsequent response activities.
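As a rough illustration, the minimum fields listed above could be modeled as a small record type. This is a sketch under stated assumptions: the `IncidentTicket` class, its field names, and the `add_entry` helper are hypothetical and do not correspond to any specific ticketing tool.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional

VALID_SEVERITIES = {"P1", "P2", "P3", "P4"}


@dataclass
class IncidentTicket:
    """Minimum fields for an incident record, plus a running timeline."""

    detected_at: datetime
    reporter: str
    affected_service: str
    severity: str
    impact_description: str
    timeline: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject tickets with blank required text fields.
        for name in ("reporter", "affected_service", "impact_description"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must not be empty")
        if self.severity not in VALID_SEVERITIES:
            raise ValueError(f"severity must be one of {sorted(VALID_SEVERITIES)}")

    def add_entry(self, note: str, at: Optional[datetime] = None) -> None:
        """Append a time-stamped note so the ticket stays the single source of truth."""
        stamp = (at or datetime.now(timezone.utc)).isoformat(timespec="seconds")
        self.timeline.append(f"{stamp} {note}")


ticket = IncidentTicket(
    detected_at=datetime(2025, 1, 10, 23, 0, tzinfo=timezone.utc),
    reporter="on-call engineer",
    affected_service="payment-gateway",
    severity="P1",
    impact_description="No transactions can be completed.",
)
ticket.add_entry(
    "Ticket created from monitoring alert",
    at=datetime(2025, 1, 10, 23, 1, tzinfo=timezone.utc),
)
```

Validating the required fields at creation time means an incomplete record fails loudly instead of surfacing as a gap during a later audit.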

Your incident management tools should automate as much of this as possible, auto-populating fields from monitoring alerts, linking to runbooks, and routing the ticket to the appropriate team based on the service affected. The goal is to reduce the cognitive load on the responder during the most stressful part of the incident. (See the Incident Management Tools section for guidance on what to look for in a platform.)

Step 3: Categorization and Prioritization

Not all incidents are equal. A priority framework ensures that your highest-impact issues get your best resources immediately, while lower-priority incidents are queued and tracked without disrupting active response.

The most widely adopted model uses four priority levels, each tied to defined response and resolution targets. ITIL provides the conceptual framework, but the specific thresholds should be calibrated to your organization’s service level agreements (SLAs) and business context.

| Priority | Definition | Example | Target Response Time |
| --- | --- | --- | --- |
| P1: Critical | Complete service outage or severe degradation affecting all users or core business operations. Revenue impact is immediate. | Payment processing is down. No transactions can be completed. | Immediate, within 15 minutes |
| P2: High | Significant degradation affecting a large subset of users or a critical function. A workaround may exist but is impractical at scale. | Login is intermittently failing for 30% of users. | Within 30 minutes |
| P3: Medium | Partial disruption affecting a non-critical function or a limited number of users. A workaround exists and is easy to apply. | Report export is producing incorrect date formatting for a small number of accounts. | Within 4 hours |
| P4: Low | Minor issue with minimal user impact. No workaround needed. Can be addressed in normal sprint planning. | Dashboard widget displays a tooltip in the wrong position on mobile browsers. | Within 24 to 48 hours |
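The response targets in the table can be encoded as a simple lookup so that tooling can compute a respond-by time for each ticket. The following is a minimal sketch. The function names are hypothetical, and taking 48 hours (the upper end of the range) as the P4 target is an assumption made for the example.

```python
from datetime import datetime, timedelta, timezone

# Target response times taken from the priority table above.
RESPONSE_TARGETS = {
    "P1": timedelta(minutes=15),
    "P2": timedelta(minutes=30),
    "P3": timedelta(hours=4),
    "P4": timedelta(hours=48),  # assumption: upper end of the 24 to 48 hour range
}


def response_deadline(priority: str, detected_at: datetime) -> datetime:
    """Return the latest time by which a responder should have engaged."""
    return detected_at + RESPONSE_TARGETS[priority]


def is_response_overdue(priority: str, detected_at: datetime, now: datetime) -> bool:
    """True once the response target for this priority has passed."""
    return now > response_deadline(priority, detected_at)


detected = datetime(2025, 1, 10, 23, 0, tzinfo=timezone.utc)
print(response_deadline("P1", detected).isoformat())
```

Your own thresholds should come from your SLAs, so treat the dictionary as the part to recalibrate.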

Step 4: Investigation and Diagnosis

Once the incident is logged and prioritized, the investigation phase begins. A named Resolver, a technical owner with context on the affected system, takes ownership of diagnosis. The Incident Commander coordinates the response, ensures the Resolver has what they need, and manages escalation if progress stalls.

Escalation criteria should be pre-defined, not improvised under pressure. If a P1 has no resolution path within 30 minutes, it escalates to senior engineering or an on-call manager. If investigation reveals a security dimension, it escalates to the security team. These triggers should be in a runbook.
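The two triggers described above can be written down as explicit rules, which is one way to keep them out of anyone's head during an outage. This sketch assumes a hypothetical `IncidentState` record and placeholder target names. A real runbook would map these to actual on-call rotations.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import List

P1_NO_PATH_LIMIT = timedelta(minutes=30)


@dataclass(frozen=True)
class IncidentState:
    priority: str
    elapsed: timedelta          # time since the incident was declared
    has_resolution_path: bool   # does the Resolver have a credible plan?
    security_related: bool      # has investigation revealed a security dimension?


def escalation_targets(state: IncidentState) -> List[str]:
    """Return who should be pulled in, based on pre-defined triggers."""
    targets: List[str] = []
    if (
        state.priority == "P1"
        and not state.has_resolution_path
        and state.elapsed >= P1_NO_PATH_LIMIT
    ):
        targets.append("senior-engineering")
    if state.security_related:
        targets.append("security-team")
    return targets


stalled_p1 = IncidentState("P1", timedelta(minutes=35), False, False)
print(escalation_targets(stalled_p1))
```

Because the rules are data-driven, the Incident Commander can evaluate them on a timer rather than relying on judgment under pressure.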

Communication to stakeholders runs in parallel throughout this phase and does not wait for a resolution. Internal stakeholders (product, management, customer success) and external stakeholders (customers, partners) have different information needs and different cadences, but both must be served.

| Pro Tip: Keep your status page updated throughout the investigation, even when you have nothing new to report. A message that says ‘Investigation is ongoing, next update in 30 minutes’ is infinitely better than silence. Silence reads as indifference. |

Step 5: Resolution and Recovery

Resolution and recovery are two different outcomes. Resolution means the root cause has been addressed or a stable workaround is in place. Recovery means the service is fully restored to its normal operating state and verified to be performing correctly.

Distinguishing between a workaround and a permanent fix is critical. A workaround restores service quickly but does not eliminate the underlying cause; it buys time. A permanent fix addresses the root cause and should be tracked as a change request with proper testing and approval. Treating a workaround as a permanent fix is one of the leading causes of repeat incidents.

Before closing an incident, verify: Is the affected service performing within normal parameters? Have all impacted users been notified of restoration? Has the incident ticket been updated with a resolution summary? Has a post-incident review been scheduled if the incident was P1 or P2?
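These closure questions lend themselves to a simple gate in tooling. The sketch below is illustrative only. The `ClosureCheck` fields mirror the four questions above, and the names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ClosureCheck:
    priority: str
    service_within_normal_parameters: bool
    users_notified_of_restoration: bool
    resolution_summary_recorded: bool
    review_scheduled: bool = False


def open_items(check: ClosureCheck) -> List[str]:
    """List everything that still blocks closing the incident."""
    items: List[str] = []
    if not check.service_within_normal_parameters:
        items.append("Verify the service is performing within normal parameters")
    if not check.users_notified_of_restoration:
        items.append("Notify impacted users of restoration")
    if not check.resolution_summary_recorded:
        items.append("Add a resolution summary to the ticket")
    # A post-incident review is only required for P1 and P2 incidents.
    if check.priority in {"P1", "P2"} and not check.review_scheduled:
        items.append("Schedule the post-incident review")
    return items


p1_check = ClosureCheck("P1", True, True, True, review_scheduled=False)
print(open_items(p1_check))
```

An empty list means the incident can be closed. Anything else is the remaining to-do list.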

Step 6: Post-Incident Review (Don’t Skip This)

The post-incident review (PIR), sometimes called a postmortem, is the step most teams skip, and the one that delivers the most long-term value. Rather than a blame session, it is a structured, blameless analysis of what happened, why it happened, and what the organization will do differently.

Google’s Site Reliability Engineering (SRE) handbook popularized the concept of the blameless postmortem: the goal is to understand system failures, not to find a person to hold responsible. When teams feel safe to be honest about what went wrong, postmortems unearth actionable insights rather than defensive summaries.

For P1 and P2 incidents, a postmortem should be completed within 48 to 72 hours while the details are fresh. The output should include documented action items with owners and due dates, going well beyond a narrative of what occurred.

5-Question Blameless Postmortem Template

- What exactly happened, and what was the customer or business impact?

- What was the root cause: the underlying system or process failure that allowed this to occur, not just the surface symptom?

- How did we detect the incident, and could we have detected it faster? What monitoring or alerting gaps exist?

- What did we do well during the response, and what slowed us down?

- What specific, measurable actions will we take to prevent this class of incident from recurring, and who owns each action by what date?
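Because the final question depends on every action having an owner and a date, it can help to check that mechanically before a postmortem is marked complete. This is a minimal sketch with a hypothetical `ActionItem` type. The field names are assumptions rather than any platform's schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class ActionItem:
    description: str
    owner: Optional[str] = None
    due: Optional[date] = None
    done: bool = False


def missing_owner_or_date(items: List[ActionItem]) -> List[ActionItem]:
    """Action items that cannot be tracked because they lack an owner or due date."""
    return [item for item in items if not item.owner or item.due is None]


def overdue(items: List[ActionItem], today: date) -> List[ActionItem]:
    """Open action items whose due date has already passed."""
    return [
        item for item in items
        if not item.done and item.due is not None and item.due < today
    ]


actions = [
    ActionItem("Add memory alert for payment gateway", "alice", date(2025, 2, 1)),
    ActionItem("Upgrade third-party library"),  # no owner or date yet
]
print([item.description for item in missing_owner_or_date(actions)])
```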

Types of Incident Management: IT, Cybersecurity, DevOps and Beyond

Incident management spans multiple disciplines. The principles are universal, but the application varies significantly by domain. Understanding which flavor applies to your context is the first step to building a system that actually fits your team.

IT Incident Management (ITIL Framework)

IT incident management, as codified by the ITIL (Information Technology Infrastructure Library) framework, is the most widely adopted form of structured incident management globally. ITIL defines an incident as ‘an unplanned interruption to an IT service or a reduction in the quality of an IT service.’

ITIL 4, the current iteration, moves beyond the prescriptive process-heavy approach of earlier versions and focuses on value co-creation, flexibility, and integration with modern practices like DevOps and Agile. For most IT service management (ITSM) teams, ITIL 4 provides a solid conceptual foundation without mandating rigid process adherence.

| For a full breakdown of the ITIL framework, see our guide: What Is IT Service Management (ITSM)? Complete Guide. |

Cybersecurity Incident Management

Cybersecurity incident management deals specifically with breaches, ransomware attacks, data leaks, phishing campaigns, insider threats, and any event that compromises the confidentiality, integrity, or availability of data or systems. The stakes are higher, the timelines are tighter, and the regulatory consequences of poor response are severe.

The NIST Cybersecurity Framework and ISO 27001 both provide widely adopted structures for cybersecurity incident response. NIST’s incident response lifecycle, as described in NIST SP 800-61 Revision 2, covers Preparation, Detection and Analysis, Containment/Eradication/Recovery, and Post-Incident Activity, mapping closely to the general six-step process above, with additional rigor around evidence preservation and forensic investigation.

Organizations operating in the UAE and Saudi Arabia face particularly stringent cybersecurity incident reporting obligations. The UAE’s National Electronic Security Authority (NESA) and the Saudi National Cybersecurity Authority (NCA) both mandate defined incident classification, notification timelines, and documentation standards.

DevOps and SRE Incident Management

Site Reliability Engineering (SRE) teams, a discipline pioneered at Google, treat incident management as a core engineering function with the same rigor applied to feature development. The priorities are speed, automation, and a culture of continuous learning.

SRE incident management centers on on-call rotations with clearly defined escalation paths, service level objectives (SLOs) and error budgets that make acceptable reliability explicit, and blameless postmortems as a default cultural practice. Tooling matters enormously in this context: alert routing, runbook automation, and incident timeline generation should all be handled by the platform, freeing engineers to focus on diagnosis rather than administration.
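An error budget is simply the downtime an SLO permits over a given window: budget = (1 − SLO target) × window length. The short sketch below works through that arithmetic. The function names and the 99.9% over 30 days figures are illustrative assumptions, not values taken from any specific team.

```python
MINUTES_PER_DAY = 24 * 60


def error_budget_minutes(slo_target: float, window_days: int) -> float:
    """Allowed downtime in minutes for an availability SLO over a window."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be a fraction between 0 and 1")
    return (1.0 - slo_target) * window_days * MINUTES_PER_DAY


def budget_remaining_minutes(
    slo_target: float, window_days: int, downtime_minutes: float
) -> float:
    """Budget left after subtracting downtime already incurred (may go negative)."""
    return error_budget_minutes(slo_target, window_days) - downtime_minutes


# A 99.9% SLO over 30 days allows roughly 43.2 minutes of downtime.
budget = error_budget_minutes(0.999, 30)
print(round(budget, 1))
```

A negative remaining budget is the signal, in SRE practice, to prioritize reliability work over new features until the service is back within its objective.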

For DevOps and SRE teams, the measure of a good incident management system is simple: does it reduce MTTR? Tooling that adds friction, such as manual ticket creation, unclear ownership, or siloed communication, works against that goal.

Workplace/Safety Incident Management

Physical incident management is a discipline frequently overlooked in technology-focused conversations about IMS, but for organizations in manufacturing, construction, healthcare, logistics, and facilities management, it is arguably more consequential than IT incident management.

Workplace safety incident management covers injuries, near-misses, equipment failures, environmental hazards, and any physical event that endangers personnel or property. It follows similar principles to IT incident management, covering detection, logging, investigation, resolution, and review, with additional requirements around regulatory reporting (OSHA in the US, DOSH in Malaysia, the Ministry of Labour in India and the UAE), witness statements, and corrective action tracking.

For organizations operating in India, Malaysia, and Indonesia, markets where manufacturing and industrial sectors employ tens of millions of workers, a structured safety IMS drives workforce safety outcomes directly rather than serving as a compliance checkbox. Yet few technology vendors in these markets have built IMS platforms that adequately serve the physical safety use case alongside IT and cybersecurity.

Incident Management Tools: What to Look For

The right incident management tools actively accelerate resolution rather than simply recording what happened. A good IMS tool does the heavy lifting your team cannot do manually at scale: routing alerts to the right people at 3 AM, auto-generating incident timelines for postmortems, and producing the compliance reports your auditor needs in minutes rather than days.

Core Features Every Incident Management Tool Should Have

| Feature | Why It Matters |
| --- | --- |
| Automated Alerting and Detection | Integration with your monitoring stack (cloud infrastructure, APM, synthetic monitoring) so incidents are detected and logged automatically, before a customer reports them. Alert de-duplication and noise reduction are critical to prevent on-call fatigue. |
| Centralized Incident Log | A single, searchable repository of every incident, open, in progress, and resolved. Without this, historical analysis is impossible and compliance documentation becomes a manual nightmare. |
| Severity and Priority Routing | Automatic classification and routing of incidents based on predefined severity rules ensures P1 incidents are never stuck in a general queue. Routing logic should be configurable without engineering involvement. |
| Escalation and On-Call Management | Built-in on-call scheduling with escalation policies ensures the right person is always reachable. If the primary responder does not acknowledge within a defined window, the system escalates automatically. |
| Stakeholder Communication | Templates for internal and external communications, including status page integration, mean your Communication Lead can post updates in seconds rather than drafting from scratch under pressure. |
| Post-Incident Review Workflow | A structured PIR workflow built into the platform ensures postmortems are completed consistently, action items are tracked, and learnings are documented rather than lost in a shared doc no one can find. |
| Compliance Reporting and Audit Trail | Auto-generated reports showing incident timelines, response actions, resolution times, and communication logs are essential for demonstrating compliance with CERT-In, NESA, NCA, ISO 27001, and other frameworks. |
| ITSM and CMDB Integration | Bi-directional integration with your ITSM platform and configuration management database (CMDB) ensures incidents are linked to affected assets and changes are properly tracked. |
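To make the alert de-duplication feature concrete, here is a minimal sketch of one possible approach: suppress repeat notifications for the same service and check until a quiet window has elapsed since the last page. The `AlertDeduplicator` class and the ten-minute window are assumptions for illustration. Commercial platforms typically use richer grouping rules.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Tuple


class AlertDeduplicator:
    """Notify at most once per (service, check) pair within `window`."""

    def __init__(self, window: timedelta) -> None:
        self.window = window
        # Time of the last notification actually sent for each alert key.
        self._last_notified: Dict[Tuple[str, str], datetime] = {}

    def should_notify(self, service: str, check: str, at: datetime) -> bool:
        key = (service, check)
        last = self._last_notified.get(key)
        if last is not None and at - last < self.window:
            return False  # duplicate inside the window: suppress
        self._last_notified[key] = at
        return True


dedup = AlertDeduplicator(window=timedelta(minutes=10))
start = datetime(2025, 1, 10, 3, 0, tzinfo=timezone.utc)
print(dedup.should_notify("api", "latency", start))
print(dedup.should_notify("api", "latency", start + timedelta(minutes=5)))
```

Because the window is measured from the last notification rather than the last alert, a persistent problem still re-pages once per window instead of going silent.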

Incident Management Tool Categories

The incident management tooling landscape is broad. Understanding the major categories helps you identify where your current gaps are and what type of solution will close them.

On-Call and Alert Management platforms specialize in getting the right person notified fast. They excel at alert routing, on-call scheduling, escalation policies, and incident timeline generation. They are purpose-built for speed and are particularly popular with DevOps and SRE teams.

ITSM platforms provide a broader service management framework. Incident management is one module within a larger suite that includes problem management, change management, asset management, and CMDB. These platforms are a strong fit for mature IT operations teams that need end-to-end ITIL alignment.

Unified Observability and Incident Management platforms integrate monitoring and incident management into a single surface. Detection, alerting, and incident creation are tightly coupled with performance data, reducing the time from anomaly to action.

Cybersecurity Incident Response platforms are made for security operations centers (SOCs). They incorporate playbook automation, threat intelligence integration, and forensic evidence management alongside standard incident management workflows.

How to Choose the Right Incident Management Tool: A Buyer’s Checklist

Use this checklist to evaluate any IMS platform against your organization’s specific requirements before committing to a vendor.

Incident Management Tool Evaluation Checklist

- Does it support automated alerting across cloud, SaaS, and on-premises systems?

- Can it route incidents by severity and team ownership automatically?

- Does it integrate with your existing ITSM, Slack, or ticketing tool?

- Does it offer real-time status page communication for customers?

- Are on-call scheduling and escalation built in, or do they require a separate tool?

- Can it generate audit-ready compliance reports on demand (CERT-In, NESA, NCA)?

- Does it include structured post-incident review workflows?

- Is the pricing transparent and scalable as your team grows?

- Does the vendor offer implementation support and regional data residency options?

For a detailed comparison of leading platforms, see our guide: Best ITSM Tools for Growing Businesses: 2026 Comparison.

Incident Management Best Practices: What Separates Good Teams from Great Ones

Having a process on paper and executing it under pressure are two different things. The teams that consistently handle incidents well are the ones with the clearest habits and the most honest culture, regardless of budget or headcount.

Here is what best-in-class looks like in practice.

1. Define Roles Before the Incident Happens

The worst time to decide who owns an incident is in the middle of one. When every second counts, ambiguity about who is in charge creates delays, and delays compound into extended outages.

Create a RACI chart for incident response when nothing is on fire. Every incident should have three clearly named roles assigned before any responder picks up the phone:

Incident Commander: Owns the overall resolution, coordinates the response, manages escalation, and makes go/no-go decisions on remediation steps.

Communication Lead: Owns all stakeholder updates, writes and posts internal and external communications, manages the status page, and serves as the single voice to leadership and customers.

Resolver: Owns the technical diagnosis and fix, with deep context on the affected system, working on the problem while the Incident Commander handles everything else.

These roles can and often do rotate. What matters is that when an incident is declared, the roles are filled within minutes rather than improvised over the course of an hour.

2. Communicate Early, Communicate Often

Internal communication (what the team knows) and external communication (what customers and leadership know) must run in parallel, not sequentially. Waiting until you have a resolution to communicate is a guaranteed way to turn a contained incident into a trust crisis.

The Communication Lead’s first job is to post a holding message within ten minutes of incident declaration, even if the only information available is that the team is investigating. Use a standard template so this step requires no creative energy under pressure:

Templated Status Update:

- [TIME] | [Incident Title]

- Status: Investigating

- Impact: [Brief description of what users are experiencing]

- Affected Services: [List affected services]

- Our team is aware of this issue and is actively investigating. We will provide an update by [TIME + 30 minutes].

- For urgent support, contact [support channel].

Update on this cadence: every 30 minutes for P1, every hour for P2, until resolution. Customers who are kept informed are significantly more forgiving than those who receive silence followed by a single ‘the issue is resolved’ post.
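The template and cadence above are easy to automate so that the next-update time is never left to guesswork. The following sketch fills in the holding message and computes the promised update time from the priority. The function name, parameters, and example values are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import List

# Update cadence from the guidance above: every 30 minutes for P1, hourly for P2.
UPDATE_CADENCE = {"P1": timedelta(minutes=30), "P2": timedelta(hours=1)}


def render_holding_message(
    title: str,
    impact: str,
    affected_services: List[str],
    priority: str,
    posted_at: datetime,
    support_channel: str,
) -> str:
    """Fill in the holding-message template for a newly declared incident."""
    next_update = posted_at + UPDATE_CADENCE[priority]
    return "\n".join([
        f"{posted_at:%H:%M} | {title}",
        "Status: Investigating",
        f"Impact: {impact}",
        f"Affected Services: {', '.join(affected_services)}",
        "Our team is aware of this issue and is actively investigating. "
        f"We will provide an update by {next_update:%H:%M}.",
        f"For urgent support, contact {support_channel}.",
    ])


message = render_holding_message(
    title="Payment gateway outage",
    impact="Card payments are failing at checkout.",
    affected_services=["Checkout", "Billing API"],
    priority="P1",
    posted_at=datetime(2025, 1, 10, 23, 10, tzinfo=timezone.utc),
    support_channel="support@example.com",
)
print(message)
```

In a real status page post you would also state the time zone next to each timestamp. It is omitted here to keep the sketch short.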

3. Run Blameless Postmortems

Incidents expose system failures. The blameless postmortem, a practice popularized by Google’s SRE team and since adopted by high-performing engineering organizations globally, is grounded in this principle: the goal of every post-incident review is to improve systems and processes, never to assign blame to individuals.

When engineers fear that a postmortem will result in personal consequences, they write defensive summaries that protect their reputation rather than honest analyses that protect the system. The result is a document that says ‘human error’ where the real answer is ‘a system that made human error inevitable.’

Organizations that consistently run blameless postmortems and follow through on action items resolve incidents 40% faster within six months. The gains come from systematically removing the root causes that were driving repeats, rather than from any improvement in individual performance.

| Pro Tip: Use the five-question template above, require action items with named owners and specific due dates, share postmortems internally (with optional redaction for sensitive details), and track action item completion in your incident management platform. |

4. Track Metrics That Actually Matter

Incident management generates data. That data is only valuable if you track the right metrics and act on the trends they reveal. Vanity metrics, like the total number of incidents created, tell you nothing useful. The following five metrics, tracked consistently, give you a clear picture of your incident management maturity.

| Metric | What It Measures | Good Benchmark |
| --- | --- | --- |
| MTTD: Mean Time to Detect | The average time between when an incident begins and when it is detected by your team. A high MTTD means your monitoring is insufficient or alert thresholds are misconfigured. | Under 5 minutes for P1 (automated detection) |
| MTTR: Mean Time to Resolve | The average time from incident detection to full service restoration. This is the headline metric for incident management performance and the one most directly tied to business impact. | Under 1 hour for P1; under 4 hours for P2 |
| MTTA: Mean Time to Acknowledge | The average time from alert firing to a responder acknowledging the incident. High MTTA often signals on-call fatigue, poor escalation paths, or alert noise drowning out real incidents. | Under 10 minutes for P1 |
| Incident Volume by Category | The breakdown of incidents by type (infrastructure, application, security, human error, third-party). Trends in this metric reveal where your reliability investment should be directed. | Declining volume in repeat categories over 6 months |
| Repeat Incident Rate | The percentage of incidents caused by a root cause that has occurred before. A high repeat rate is a direct indicator that postmortems are not generating actionable follow-through. | Under 10% repeat rate |
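If your incident log stores a few timestamps per incident, the time-based metrics in the table reduce to averages over intervals. The sketch below shows one way to compute them. The `IncidentRecord` fields are hypothetical, and it assumes the alert fires at the moment of detection, so MTTA is measured from `detected_at`.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Dict, Iterable, List


@dataclass
class IncidentRecord:
    started_at: datetime
    detected_at: datetime
    acknowledged_at: datetime
    resolved_at: datetime
    repeat_root_cause: bool  # True if this root cause has occurred before


def _mean_minutes(deltas: Iterable[timedelta]) -> float:
    return mean(delta.total_seconds() for delta in deltas) / 60


def summarize(records: List[IncidentRecord]) -> Dict[str, float]:
    """Compute MTTD, MTTA, and MTTR in minutes, plus repeat rate in percent."""
    if not records:
        raise ValueError("at least one incident record is required")
    return {
        "mttd_minutes": _mean_minutes(r.detected_at - r.started_at for r in records),
        "mtta_minutes": _mean_minutes(r.acknowledged_at - r.detected_at for r in records),
        "mttr_minutes": _mean_minutes(r.resolved_at - r.detected_at for r in records),
        "repeat_rate_pct": 100 * sum(r.repeat_root_cause for r in records) / len(records),
    }


day = datetime(2025, 1, 10)
history = [
    IncidentRecord(day.replace(hour=10), day.replace(hour=10, minute=4),
                   day.replace(hour=10, minute=6), day.replace(hour=10, minute=44), False),
    IncidentRecord(day.replace(hour=12), day.replace(hour=12, minute=2),
                   day.replace(hour=12, minute=10), day.replace(hour=13, minute=2), True),
]
print(summarize(history))
```

In practice you would compute these per priority level, since the benchmarks in the table differ for P1 and P2.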

Incident Management Compliance: What Businesses in Asia and the Middle East Need to Know

| India | CERT-In Incident Reporting Requirements: The Indian Computer Emergency Response Team (CERT-In) directions, effective from June 2022, mandate that organizations report cybersecurity incidents to CERT-In within six hours of detection. Covered incident types include data breaches, ransomware attacks, unauthorized access to IT systems, and attacks on critical infrastructure. Organizations must maintain logs of all ICT systems for 180 days within Indian jurisdiction and provide these logs to CERT-In on demand. An incident management system with automated logging, time-stamped audit trails, and rapid report generation is essential for compliance. Failure to comply can result in imprisonment of up to one year for the responsible person-in-charge. |

| UAE | NESA and IA Controls: The UAE National Electronic Security Authority (NESA), now operating under the Cybersecurity Council, has established a set of Information Assurance Standards (IAS) that includes specific requirements for incident management processes. Organizations designated as critical information infrastructure (CII) are required to have documented incident response plans, defined incident classification schemes, and regular incident response drills. The UAE Cybersecurity Law (Federal Decree-Law No. 34 of 2021) further strengthens obligations around data breach notification and incident documentation. The Dubai Electronic Security Centre (DESC) issues additional requirements for organizations operating in the Dubai Government context. |

| Saudi Arabia | NCA Essential Cybersecurity Controls (ECC): The Saudi National Cybersecurity Authority (NCA) Essential Cybersecurity Controls (ECC-1:2018) establish a comprehensive set of requirements for organizations operating in the Kingdom. Domain 2 of the ECC, covering Cybersecurity Defense, includes a subdomain on cybersecurity incident and threat management, with requirements spanning detection, classification, response, recovery, and post-incident review. Organizations in critical sectors (energy, finance, telecommunications, and government) face additional requirements under sector-specific regulations. The NCA also mandates that organizations conducting business in Saudi Arabia store incident-related data within the Kingdom’s borders, making data residency a key criterion when selecting IMS tooling. |

| Malaysia | PDPA Alignment: Malaysia’s Personal Data Protection Act (PDPA) 2010, along with its ongoing reform process under the PDPA Amendment Bill, establishes obligations around the handling of personal data breaches. While Malaysia’s PDPA does not yet mandate breach notification to regulators on the same timeline as GDPR or CERT-In, the proposed amendments are expected to introduce mandatory breach notification within 72 hours of discovery. Separately, Bank Negara Malaysia (BNM) and the Securities Commission Malaysia impose incident reporting requirements on financial institutions. Organizations in Malaysia should ensure their IMS is capable of generating the timeline documentation required for both regulatory and insurance purposes. |

| Indonesia | BSSN Guidelines: Indonesia’s National Cyber and Crypto Agency (BSSN) has published technical guidelines for cybersecurity incident response that apply to government institutions and are increasingly adopted as best practice standards for private sector organizations. Indonesia’s Government Regulation No. 71 of 2019 also establishes obligations around the security and management of electronic systems, including incident reporting for strategic electronic systems. The Personal Data Protection Law (UU PDP), passed in 2022, introduces data breach notification requirements with a notification window of 3 x 24 hours (72 hours) to the supervisory authority and to affected data subjects. Organizations operating in Indonesia should align their IMS documentation and reporting capabilities to both BSSN guidelines and UU PDP requirements. |
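Short reporting windows are easier to meet when the deadline is computed the moment an incident is detected. As a minimal sketch, the six-hour CERT-In window described in the India entry can be tracked as follows. The function names are hypothetical, and the code is an illustration rather than legal guidance on when the clock starts.

```python
from datetime import datetime, timedelta, timezone

# Six-hour reporting window, as stated in the India entry above.
CERT_IN_REPORTING_WINDOW = timedelta(hours=6)


def cert_in_deadline(detected_at: datetime) -> datetime:
    """Latest time to report, counted from the moment of detection."""
    if detected_at.tzinfo is None:
        raise ValueError("detected_at must be timezone-aware")
    return detected_at + CERT_IN_REPORTING_WINDOW


def time_remaining(detected_at: datetime, now: datetime) -> timedelta:
    """Time left before the deadline, floored at zero once it has passed."""
    return max(cert_in_deadline(detected_at) - now, timedelta(0))


detected = datetime(2025, 1, 10, 23, 0, tzinfo=timezone.utc)
print(cert_in_deadline(detected).isoformat())
```

Requiring timezone-aware timestamps avoids the most common source of error in deadline tracking across distributed teams.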

Frequently Asked Questions About Incident Management Systems

What is the difference between incident management and problem management?

Incident management is reactive: it focuses on restoring normal service operation as quickly as possible following an unplanned disruption, with speed as the primary goal. Problem management is investigative: it focuses on identifying and eliminating the root cause of one or more incidents to prevent recurrence, with permanence as the goal.

What are the 5 stages of incident management?

While this article outlines six steps (treating detection and logging as separate steps), the classic five-stage model covers: (1) Identification, detecting and logging the incident; (2) Categorization and Prioritization, classifying by type and severity; (3) Investigation and Diagnosis, root cause analysis and escalation; (4) Resolution and Recovery, fixing the issue and restoring service; and (5) Closure and Review, post-incident documentation and postmortem.

What is ITIL incident management?

ITIL incident management is the incident management discipline as defined by the Information Technology Infrastructure Library (ITIL) framework, the most widely adopted set of IT service management best practices globally. ITIL defines an incident as any unplanned interruption or quality reduction of an IT service and prescribes a structured lifecycle for detecting, logging, categorizing, prioritizing, investigating, resolving, and closing incidents.

How do I choose the right incident management tool?

Start with your use case: are you primarily managing IT incidents, cybersecurity events, on-call escalations, or a combination? Then evaluate tools against the core features checklist above, covering automated alerting, centralized logging, severity routing, escalation management, stakeholder communication, PIR workflows, compliance reporting, and ITSM integration.

What is a major incident in ITIL?

In ITIL, a major incident is the highest-severity classification, typically equivalent to P1 in a priority framework. ITIL defines it as an incident that causes significant business impact and requires a dedicated major incident management process with a separate team, escalated communication cadence, and a formal post-incident review.